How Microsoft Foundry is Making AI Agents a Business Reality

Introduction

As an enterprise AI consultant, one of the most consistent patterns I see is that AI projects rarely fail because the models are weak. They fail because the surrounding systems are not designed to support AI agents operating inside real business workflows.

Microsoft Foundry was created to address this gap.

With the general availability of the Foundry Agent Service in May 2025, Microsoft shifted its focus from showcasing AI capabilities to operationalizing AI agents as governed, production-ready systems. The platform is built for organizations that need agents to do more than respond to prompts: agents that access enterprise data securely, use approved tools, follow business logic, and operate reliably over time.

From a delivery standpoint, this is a meaningful shift. Instead of manually stitching together models, APIs, security, and orchestration, Foundry provides a unified runtime where agents are treated as first-class applications. Models are interchangeable, tools are permissioned, data access is controlled, and agent behavior is observable.

This marks a move away from experimental AI and toward systems that can support finance, operations, customer service, and supply chain workflows at scale. The value of Microsoft Foundry is not novelty. It is structure.

How Does Microsoft Foundry Build Enterprise-Ready AI Agents?

From a delivery perspective, the challenge with AI agents has never been intelligence. It has been coordination. Models, tools, data access, and governance often live in separate layers, making agents fragile once they leave experimentation.

Microsoft Foundry approaches this differently. It treats agent development as an industrial process rather than a creative experiment.

Think of Foundry as a modern factory for building intelligent agents. Instead of a loosely connected workshop, it provides a structured assembly line where each stage adds a required enterprise capability. The Foundry Agent Service governs this process end to end, ensuring agents are composable, permissioned, and production-ready.

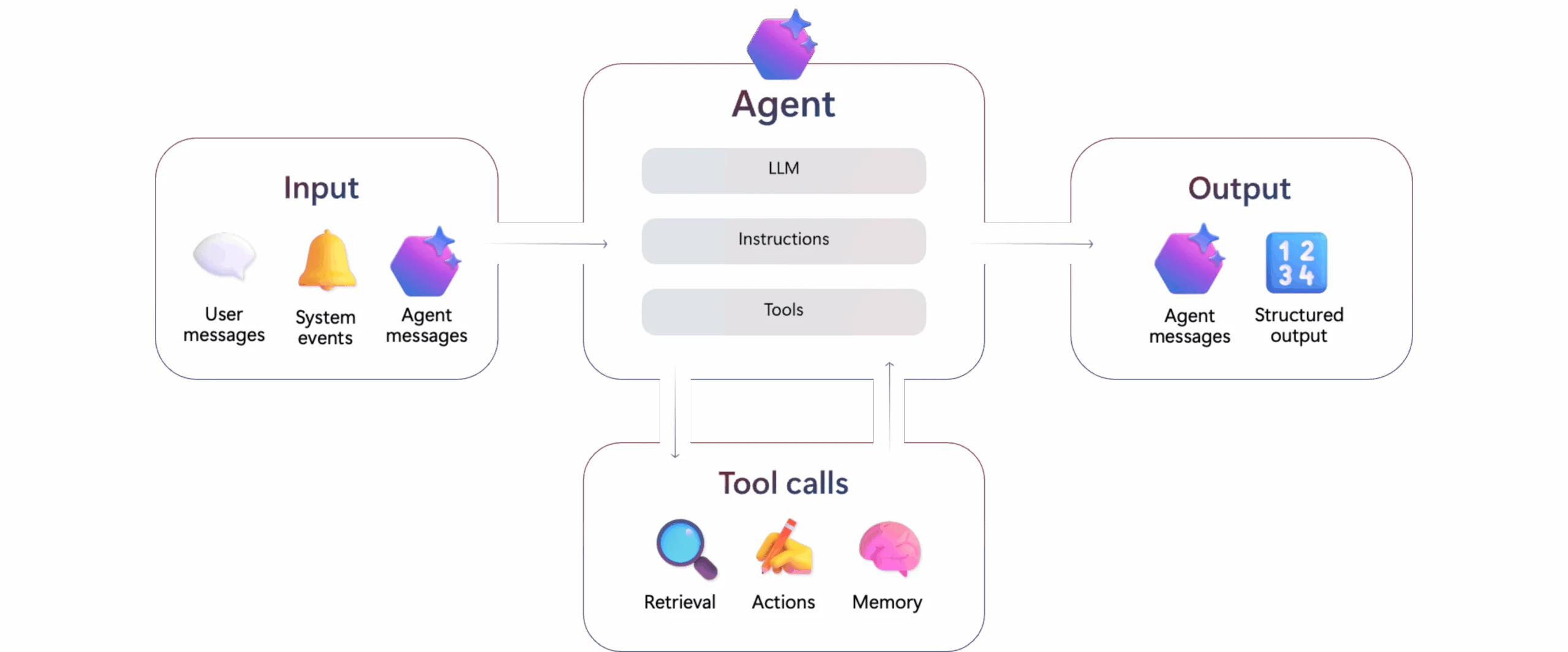

An enterprise agent is not just a language model. It is a system component with a defined role, approved tools, controlled data access, and a secure execution environment. Here is how those components come together.

Station 1: The Reasoning Core (Models)

Every AI agent begins with a reasoning engine. In practice, enterprises rarely benefit from a single-model strategy.

Microsoft Foundry provides access to a catalog of more than 11,000 models, allowing teams to select the most appropriate model per task. This includes Microsoft-native models, Azure OpenAI offerings such as GPT-4o, and leading third-party models from Anthropic, Cohere, and Meta (Llama).

What makes this usable at scale is the Model Router.

Rather than hard-coding model choices, the router dynamically selects the best-fit model for each request based on performance, quality, and cost. In controlled testing, this approach has delivered cost reductions of up to 60 percent. More importantly, it keeps teams from spending a large, expensive model on simple tasks while preserving quality for complex reasoning.
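To make the routing idea concrete, here is a minimal sketch of cost-aware model selection. It is not the Model Router's actual algorithm or API. The model names, quality scores, and prices are hypothetical, and the rule (cheapest model that clears a quality bar) is an assumption chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical label, not a real deployment name
    quality: float             # 0..1 score, assumed to come from your own evaluations
    cost_per_1k_tokens: float  # illustrative price, not a published rate


def route(options: list[ModelOption], min_quality: float) -> ModelOption:
    """Return the cheapest option whose quality meets the request's bar."""
    eligible = [o for o in options if o.quality >= min_quality]
    if not eligible:
        # Nothing clears the bar: fall back to the highest-quality option.
        return max(options, key=lambda o: o.quality)
    return min(eligible, key=lambda o: o.cost_per_1k_tokens)


CATALOG = [
    ModelOption("small-model", quality=0.70, cost_per_1k_tokens=0.2),
    ModelOption("mid-model", quality=0.85, cost_per_1k_tokens=1.0),
    ModelOption("large-model", quality=0.95, cost_per_1k_tokens=5.0),
]

simple_choice = route(CATALOG, min_quality=0.6)   # a simple lookup or summary
complex_choice = route(CATALOG, min_quality=0.9)  # multi-step reasoning
print(simple_choice.name, complex_choice.name)
```

The point of the sketch is the shape of the decision: the quality requirement travels with the request, so simple requests land on the cheapest adequate model without any per-task model choice in application code.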

Station 2: Execution and Knowledge (Tools and Grounding)

An agent that can reason but cannot act adds limited business value.

Microsoft Foundry equips agents with governed tools that allow them to access knowledge and execute actions inside enterprise systems.

Knowledge grounding is handled through Foundry IQ, the evolution of Azure AI Search. Instead of static indexing pipelines, retrieval becomes part of the agent’s reasoning loop. Agents can securely reference internal sources such as SharePoint, Microsoft Fabric, and OneLake, as well as controlled public sources through Bing Search and Bing Custom Search.

This matters because accuracy and traceability are non-negotiable in enterprise workflows.

Action execution is where Foundry moves beyond assistive AI. Through deep integration with Azure Logic Apps, agents gain access to more than 1,400 enterprise connectors. This enables agents to not only identify an issue, but act on it.

In practice, this means an agent can:

- Create or update records in systems like Salesforce or SAP

- Trigger workflows in Dynamics 365

- Execute code or automate browser-based tasks

- Interact with custom APIs using OpenAPI or the Model Context Protocol (MCP)

These actions are permissioned, auditable, and aligned with existing enterprise controls.
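What "permissioned and auditable" means for a tool call can be sketched in a few lines. This is an illustration only, not the Foundry SDK: `ToolRegistry`, the agent names, and the `update_crm_record` tool are all hypothetical, and a real deployment would delegate these checks to the platform's own access controls.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToolRegistry:
    """Hypothetical in-memory registry: deny by default, log every attempt."""
    tools: dict[str, Callable[..., str]] = field(default_factory=dict)
    grants: dict[str, set[str]] = field(default_factory=dict)  # agent -> tool names
    audit_log: list[tuple[str, str, str]] = field(default_factory=list)

    def register(self, name: str, fn: Callable[..., str]) -> None:
        self.tools[name] = fn

    def grant(self, agent: str, tool: str) -> None:
        self.grants.setdefault(agent, set()).add(tool)

    def invoke(self, agent: str, tool: str, **kwargs) -> str:
        allowed = tool in self.grants.get(agent, set())
        # Record the attempt before acting, so denied calls are auditable too.
        self.audit_log.append((agent, tool, "allowed" if allowed else "denied"))
        if not allowed:
            raise PermissionError(f"{agent} may not call {tool}")
        return self.tools[tool](**kwargs)


registry = ToolRegistry()
registry.register(
    "update_crm_record",
    lambda record_id, status: f"{record_id} -> {status}",  # stand-in for a real connector
)
registry.grant("support-agent", "update_crm_record")

result = registry.invoke(
    "support-agent", "update_crm_record", record_id="C-1", status="closed"
)
print(result)
```

An agent without a grant gets a `PermissionError`, and the denied attempt still appears in `audit_log`, which is the property auditors usually ask about first.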

Station 3: Orchestration and Coordination

Once agents can reason and act, they must be coordinated.

The Foundry Agent Service manages the full agent lifecycle, including tool invocation, memory handling, and state management. The most significant advancement here is multi-agent orchestration.

Instead of forcing one agent to handle everything, Foundry enables teams to design agent systems composed of specialized roles.

- Connected Agents allow point-to-point task delegation between agents

- Multi-Agent Workflows introduce a stateful orchestration layer that coordinates agents across long-running processes such as onboarding, auditing, or exception handling

These workflows support durability, error recovery, and structured handoffs. A visual workflow builder allows teams to design and reason about these systems declaratively, rather than embedding orchestration logic deep in code.
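The sketch below shows what a stateful workflow with structured handoffs and error recovery looks like in miniature. It is an assumption-laden illustration, not Foundry's workflow engine: the `Workflow` class, the step names, and the onboarding example are hypothetical. State lives in memory here, so the durability a real orchestration layer provides is out of scope.

```python
from dataclasses import dataclass, field
from typing import Callable

# Each "agent" receives the shared state and returns the fields it adds or updates.
Step = Callable[[dict], dict]


@dataclass
class Workflow:
    steps: list[tuple[str, Step]]
    max_attempts: int = 2
    history: list[str] = field(default_factory=list)

    def run(self, state: dict) -> dict:
        for name, step in self.steps:
            for attempt in range(1, self.max_attempts + 1):
                try:
                    # Structured handoff: merge this step's output into shared state.
                    state = {**state, **step(state)}
                    self.history.append(f"{name}:ok")
                    break
                except Exception:
                    self.history.append(f"{name}:failed attempt {attempt}")
                    if attempt == self.max_attempts:
                        raise  # retries exhausted, surface the error
        return state


_calls = {"verify": 0}


def collect_documents(state: dict) -> dict:
    return {"documents": ["id_card"]}


def verify_identity(state: dict) -> dict:
    _calls["verify"] += 1
    if _calls["verify"] == 1:
        raise TimeoutError("simulated transient failure")
    return {"verified": bool(state["documents"])}


def provision_access(state: dict) -> dict:
    return {"access": "granted" if state["verified"] else "denied"}


workflow = Workflow([
    ("collect", collect_documents),
    ("verify", verify_identity),
    ("provision", provision_access),
])
final_state = workflow.run({"employee": "E-100"})
print(final_state["access"], workflow.history)
```

Each specialized step sees only the shared state, and the retry policy sits in the orchestrator rather than in the agents. That separation is what a declarative workflow builder makes visible.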

Built for Developers, Structured for the Enterprise

A recurring failure point in enterprise AI initiatives is the gap between experimentation and delivery.

Microsoft Foundry is designed to close that gap. Developers can work in familiar environments using SDKs for Python, C#, JavaScript, and Java. The platform integrates cleanly with open-source frameworks such as LangChain, Semantic Kernel, AutoGen, CrewAI, and LlamaIndex, allowing teams to reuse existing patterns and code.

At the same time, Foundry enforces enterprise structure. Tool permissions, data access, and deployment paths are consistent from development through production.

Agents can be deployed directly to Microsoft Teams and Microsoft 365 environments, reducing friction between development and adoption. This short path from implementation to real usage is often what determines whether an AI initiative delivers value or stalls.

In practice, the benefits are:

- Faster time to production: Move from prototype to governed deployment without rebuilding agent architectures.

- Lower operational risk: Agents inherit existing identity, access control, and governance models.

- Environment consistency: The same tooling and deployment patterns apply across dev, test, and production.

- Higher adoption: Native deployment into Microsoft Teams and Microsoft 365 embeds agents into daily work.

- Sustainable ownership: Open frameworks and standard SDKs reduce lock-in while preserving control.

Build AI Agents That Hold Up in Production

Many organizations are experimenting with AI agents. Fewer are deploying them with the governance, data controls, and operational structure required for real business workflows. The difference is rarely the model. It is the architecture, orchestration, and delivery approach behind it.

The Governance Imperative: Building AI You Can Trust

Microsoft Foundry AI agents are built with governance at the core, not layered on later. Identity, access control, observability, and cost management are part of how agents are created and run, not optional add-ons. That matters because once AI agents move beyond demos and start interacting with enterprise data and systems, trust becomes the real constraint.

Foundry treats AI agents as first-class operational systems. Each agent runs with defined permissions, controlled data access, and clear visibility into how it reasons and acts. This enables the deployment of agents into real business workflows without bypassing the security and compliance models that enterprises already rely on.

In my experience, governance was a constant struggle in earlier AI projects. Models worked. Proofs of concept looked promising. But governance was usually addressed too late, after agents were already touching sensitive data or automating decisions. At that point, trust issues slowed adoption or stopped deployments entirely.

Foundry’s governance-first design reflects those hard lessons. It aligns how AI agents operate with how enterprises already think about risk, accountability, and control.

- Governance is embedded into Microsoft Foundry AI agents by default

- Identity, permissions, and observability are enforced at runtime

- Trust and control are prerequisites for scaling AI beyond experimentation

Identity-Driven Security by Design

Microsoft Foundry AI agents are built around identity rather than anonymous service access. Each agent is assigned a first-class identity in Microsoft Entra, allowing permissions, access, and lifecycle policies to be managed the same way they are for human users.

In practice, this addresses a gap I have seen repeatedly in earlier agent implementations. Without a clear identity model, access becomes hard to control and behavior becomes difficult to audit. Foundry’s identity-first approach enforces least-privilege access by default, limiting agents to only the data and systems required for their role.

In regulated environments, that shift is often the difference between an agent being approved for production or stopped before it ever gets there.

Observability, Safety, and Operational Oversight

In enterprise deployments, lack of visibility is one of the fastest ways to lose stakeholder confidence.

I have seen AI initiatives stall simply because teams could not explain how an agent arrived at a decision or what systems it touched along the way. Microsoft Foundry directly addresses this through a centralized control plane.

Microsoft Foundry provides deep observability into agent reasoning steps, tool usage, cost, and safety signals. Built-in tracing using OpenTelemetry allows teams to inspect agent behavior in detail, while integrated guardrails reduce risks such as prompt injection or unsafe outputs.
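To show what span-based tracing of an agent run captures, here is a toy span recorder built only on the Python standard library. It borrows the idea of nested spans with attributes from OpenTelemetry, but it is not the OpenTelemetry API and not Foundry's tracing integration. The `span` helper, the span names, and the attributes are all hypothetical.

```python
import time
from contextlib import contextmanager

SPANS: list[dict] = []   # finished spans, in completion order
_stack: list[str] = []   # names of currently open spans, innermost last


@contextmanager
def span(name: str, **attributes):
    """Record one unit of work with its parent, attributes, and duration."""
    record = {
        "name": name,
        "parent": _stack[-1] if _stack else None,
        "attributes": dict(attributes),
    }
    _stack.append(name)
    start = time.perf_counter()
    try:
        yield record
    finally:
        record["duration_s"] = time.perf_counter() - start
        _stack.pop()
        SPANS.append(record)


with span("agent.run", agent="invoice-agent") as run:
    with span("tool.call", tool="lookup_invoice") as call:
        call["attributes"]["status"] = "ok"   # stand-in for a real tool invocation
    run["attributes"]["total_tokens"] = 128   # illustrative figure

for s in SPANS:
    print(s["name"], "parent:", s["parent"], s["attributes"])
```

Because every tool call is a child of the run that triggered it, the recorded spans answer the two questions stakeholders ask most often: what did the agent do, and what did it touch along the way.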

Integration with Microsoft Defender for Cloud extends this visibility into continuous threat detection and security posture management, which is essential for enterprise acceptance.

Cost and Compliance Control at Scale

Another pattern I consistently see is cost and compliance being addressed too late.

Pilot environments scale quietly, usage grows, and suddenly AI spend and data exposure become executive concerns. Microsoft Foundry includes governance controls that help prevent this scenario.

Teams can enforce cost boundaries through quotas and automated shutdown policies, while compliance requirements are supported through integration with Microsoft Purview. This allows organizations to track regulatory obligations, enforce data policies, and protect intellectual property from day one.
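A cost boundary with automated shutdown can be sketched as a small guard that sits in front of every billable call. This is an illustration under stated assumptions, not how Foundry implements quotas: `BudgetGuard`, `BudgetExceeded`, and the dollar amounts are hypothetical.

```python
from dataclasses import dataclass


class BudgetExceeded(RuntimeError):
    """Raised when a charge would cross the limit or the agent is already stopped."""


@dataclass
class BudgetGuard:
    limit_usd: float
    spent_usd: float = 0.0
    active: bool = True

    def charge(self, cost_usd: float) -> None:
        if not self.active:
            raise BudgetExceeded("agent is shut down")
        if self.spent_usd + cost_usd > self.limit_usd:
            # Automated shutdown: refuse this call and every later one.
            self.active = False
            raise BudgetExceeded(
                f"charge of {cost_usd} would exceed limit {self.limit_usd}"
            )
        self.spent_usd += cost_usd


guard = BudgetGuard(limit_usd=10.0)
guard.charge(4.0)
guard.charge(4.0)
try:
    guard.charge(4.0)  # 8.0 + 4.0 would cross the 10.0 limit
except BudgetExceeded as exc:
    print("stopped:", exc)
print(guard.spent_usd, guard.active)
```

The guard rejects the charge that would cross the limit instead of recording the overage afterward, so spend never exceeds the boundary, and the agent stays stopped until someone deliberately re-enables it.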

From experience, these controls are not optional. They are prerequisites for sustained AI adoption.

Turn AI Agents into Trusted Operating Systems

Most organizations are still experimenting with AI agents in isolation. The real challenge begins when those agents need to operate inside governed environments, interact with enterprise data, and support real workflows without increasing risk. Getting that transition right requires more than strong models. It requires the right architecture, controls, and delivery experience.

Conclusion: From AI Assistants to AI Collaborators

Across client engagements, the most successful AI programs share one trait. They stop treating AI as a tool and start treating it as part of the operating model.

With Microsoft Foundry and the Foundry Agent Service, AI moves from isolated assistance to structured collaboration. Microsoft Foundry AI agents reason, act, and coordinate inside governed systems, alongside human teams.

The organizations that succeed are the ones that design for trust early. When AI is built with identity, observability, and control from the start, adoption follows naturally.

The shift to AI-driven operations is already underway. When you are ready to operationalize Microsoft Foundry AI agents inside real business workflows, AlphaBOLD can help bring that experience into your environment with the discipline enterprise systems require.

Frequently Asked Questions

Microsoft Foundry AI agents are autonomous, production-ready components that combine reasoning, tools, memory, and governance to operate inside real business workflows. Unlike standalone AI models that only generate responses, agents can take actions, access enterprise data securely, and coordinate across systems within a governed runtime designed for enterprise use.

Governance is built directly into how Microsoft Foundry AI agents are created and run. Each agent operates with identity-based access control, permissioned tools, observability, and policy enforcement across development and production. This ensures traceability, security, and compliance without introducing separate AI governance layers.

AlphaBOLD focuses on the delivery side of enterprise AI adoption. Rather than treating agents as isolated features, AlphaBOLD helps organizations design agent roles, governance models, data boundaries, and orchestration patterns aligned with real business workflows. This approach reduces risk, avoids stalled pilots, and enables Microsoft Foundry AI agents to operate securely and reliably in production environments.