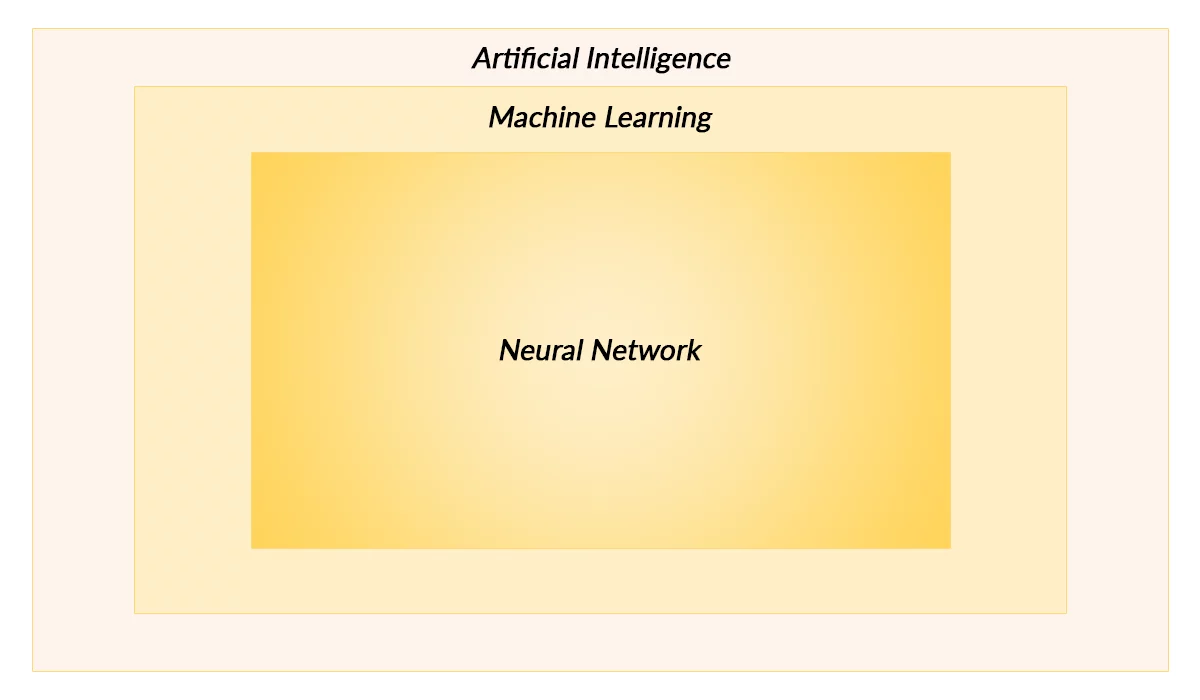

Artificial Intelligence:

AI is an overarching computer science discipline concerned with making machines think like humans: learning over time, adapting to a changing environment, making creative decisions on their own and, in its most ambitious form, having consciousness.

AI isn’t new. As a matter of fact, the term was coined by Professor John McCarthy for a workshop held at Dartmouth in 1956. He gathered a group of mathematicians and scientists to see if machines could learn by way of trial and error to develop formal reasoning. The roots of AI, however, can also be traced back to Alan Turing’s work.

AI has always remained an idea ahead of its time, but in the last few years the following factors have turned it into the next big thing in practical terms and have investors pouring money in:

- Machines are generating huge amounts of data that can be, and is, used to develop key insights and intelligence

- Advances in processing speed and the availability of seemingly endless on-demand resources (such as compute, memory and network) are enabling the processing of big data at an unprecedented scale

- The ability to patch and update software regularly helps make solutions more mature and reliable over time

- An explosion of technologies, frameworks, content and, most importantly, means of collaboration, especially in the open source world and amply backed by the proprietary tech sector, is driving innovation in the field of AI

Note: The aspect of AI that relates to the creative decision-making ability of machines is known as general AI or human-level AI. It is largely experimental and theoretical; quite honestly, we are not there yet. In this article I will be covering ML at a high level.

Machine Learning:

Also known as “Applied AI”, it is a subset of AI that also happens to be the most commercially viable. It involves training machines to perform specific tasks that they get better at executing over time.

This is different from general-purpose AI, because a machine learning solution lacks ingenuity. Take an example: a system trained to play chess will only be able to play chess. Over time it will get better at this “acquired” skill by “learning” from the different datasets it is given, but its model will remain the same. That system will fail when attempting checkers because it is not designed to understand this new and “different” game. In other words, ML solutions may give an impression of being intelligent, but that is not completely true. They are just efficiently producing results from the same model they were trained on, and they cannot think outside the box.

Some of the most popular applications of ML are:

- Virtual personal assistants: Apps such as Siri, Cortana, Alexa and Google Now help us carry out our daily routine tasks

- Customer support: Conversational bots are replacing call center professionals across industries

- Fraud detection: Online credit card fraud was predicted to soar past $32 billion by 2020. ML is now widely employed as a countermeasure to detect fraudulent transactions

- Spam filtering: One of the earliest use cases, where notable email services use ML to determine whether a given email goes to spam or to the inbox. They get it right more often than not 🙂

- Healthcare: ML is currently being used for diagnosis and prognosis and has started showing dramatic results, especially in early cancer detection. The prescriptive aspect is still in question, but work is underway to make ML-based solutions more reliable at prescribing

- Autonomous surgery: Another area that is gaining a lot of traction in the medical science community. IEEE is keeping score of AI vs. doctors across various disciplines

- Social media and eCommerce: The application of ML is ubiquitous across these fields. Some of the most popular uses are:

  - Facial recognition

  - Chatbots

  - Recommendations

Artificial Neural Networks (ANNs):

Artificial neural networks stem from Machine Learning. The idea here is to make machines learn the way humans do. The components of a human brain that let one think and make smart decisions are special cells called neurons. These neurons (there are billions of them) talk to each other through electrical impulses passed across synapses, and this communication is conducted over a network made up of dendrites and axons.

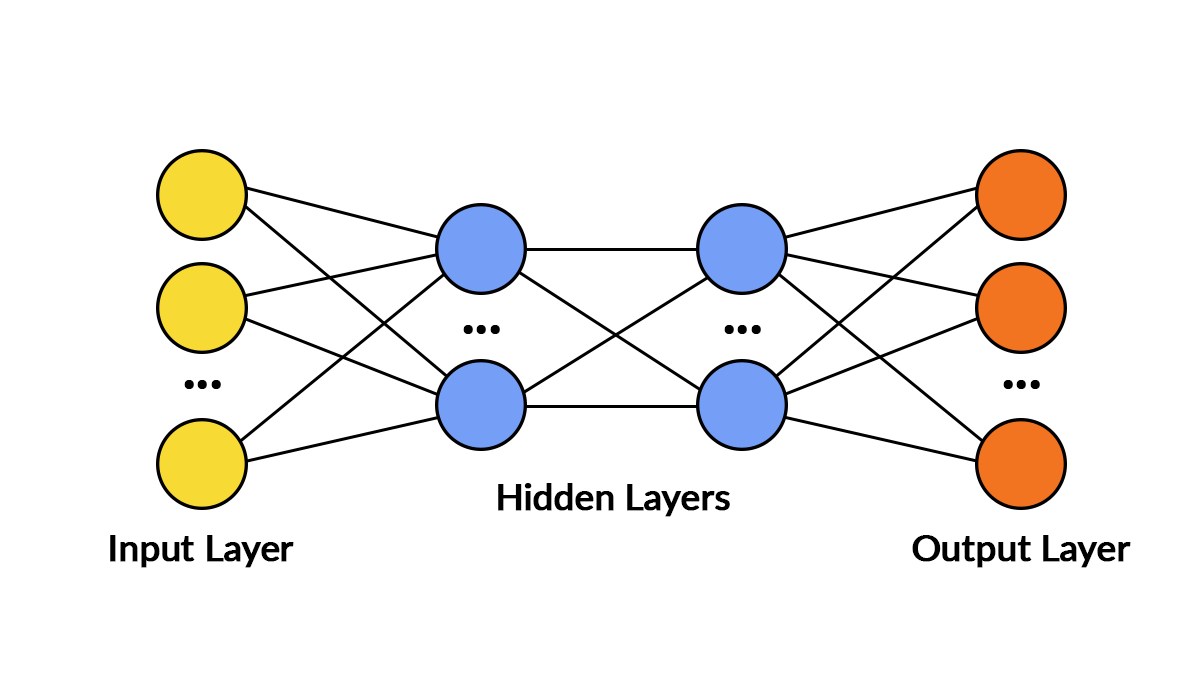

In computer science, an artificial neural network, or neural net for short, is composed of nodes (like the neurons in the brain) and the connections between them (like the synapses connecting brain cells). Simply put, there are input nodes, output nodes and the “hidden” layers in between, all connected. A hidden layer (there can be more than one) is made up of processing nodes. Information flows between these nodes, just like the transmission of chemical/electrical signals from one neuron to another in the human brain. How strongly a signal is passed on from a given node to the next is determined by weights, biases and threshold values. These values are typically set randomly at the start, but over time they are adjusted (trained) using techniques such as backpropagation.
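To make the idea of weights, biases and backpropagation a little more concrete, here is a minimal sketch in plain Python. It is an illustration under stated assumptions, not a production implementation: the function names (`sigmoid`, `forward`, `train`), the toy logical-AND dataset, the learning rate and the network size (two inputs, two hidden nodes, one output) are all made up for this example. A sigmoid activation stands in for the hard threshold mentioned above, because backpropagation needs a smooth function to compute gradients.

```python
import math
import random


def sigmoid(x):
    # Smooth stand-in for a hard threshold: squashes any number into (0, 1).
    return 1.0 / (1.0 + math.exp(-x))


def forward(x, w_hidden, b_hidden, w_out, b_out):
    # Each hidden node takes a weighted sum of the inputs plus its bias.
    hidden = [sigmoid(sum(w * xi for w, xi in zip(ws, x)) + b)
              for ws, b in zip(w_hidden, b_hidden)]
    # The output node does the same with the hidden activations.
    output = sigmoid(sum(w * h for w, h in zip(w_out, hidden)) + b_out)
    return hidden, output


def mean_squared_error(data, params):
    return sum((forward(x, *params)[1] - t) ** 2 for x, t in data) / len(data)


def train(data, n_hidden=2, epochs=2000, lr=0.5, seed=0):
    rng = random.Random(seed)
    n_in = len(data[0][0])
    # Weights and biases start out random.
    w_hidden = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
    b_hidden = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    w_out = [rng.uniform(-1, 1) for _ in range(n_hidden)]
    b_out = rng.uniform(-1, 1)
    initial_loss = mean_squared_error(data, (w_hidden, b_hidden, w_out, b_out))

    for _ in range(epochs):
        for x, target in data:
            hidden, output = forward(x, w_hidden, b_hidden, w_out, b_out)
            # Backpropagation: compute the error signal at the output node,
            # then push it back to the hidden nodes through the output weights.
            delta_out = (output - target) * output * (1 - output)
            delta_hidden = [delta_out * w_out[j] * hidden[j] * (1 - hidden[j])
                            for j in range(n_hidden)]
            # Nudge every weight and bias against its error gradient.
            for j in range(n_hidden):
                w_out[j] -= lr * delta_out * hidden[j]
                b_hidden[j] -= lr * delta_hidden[j]
                for i in range(n_in):
                    w_hidden[j][i] -= lr * delta_hidden[j] * x[i]
            b_out -= lr * delta_out

    params = (w_hidden, b_hidden, w_out, b_out)
    return params, initial_loss, mean_squared_error(data, params)


# Toy dataset (an assumption for illustration): the logical AND of two inputs.
AND_DATA = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]

params, loss_before, loss_after = train(AND_DATA)
print("error before training:", round(loss_before, 4))
print("error after training: ", round(loss_after, 4))
```

The point of the sketch is the shape of the loop: run the inputs forward through the network, measure how wrong the output is, and adjust each weight and bias slightly in the direction that reduces the error. Real projects would normally rely on an established framework rather than hand-written loops like these.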

An ANN is an excellent methodology if the intent is to find patterns in data that is too big, too complex, or both for human programmers (e.g. finding patterns in millions of photos). Besides, programmers are trained to think logically and use assertions to prove or disprove the properties of a given program. ML changes the way problems are tackled. On a philosophical level, it means finding patterns and making observations in an ever-changing environment by running experiments and using statistical data to learn and fine-tune the assertions, rather than relying on logic alone.

The learning process, however, is slow, but artificial neural networks can run 24/7 at peak performance.

We at AlphaBOLD specialize in implementing smart solutions that can help your business gain a competitive advantage by automating your manual business processes.

Contact us today, we will be more than happy to assist!