Introduction

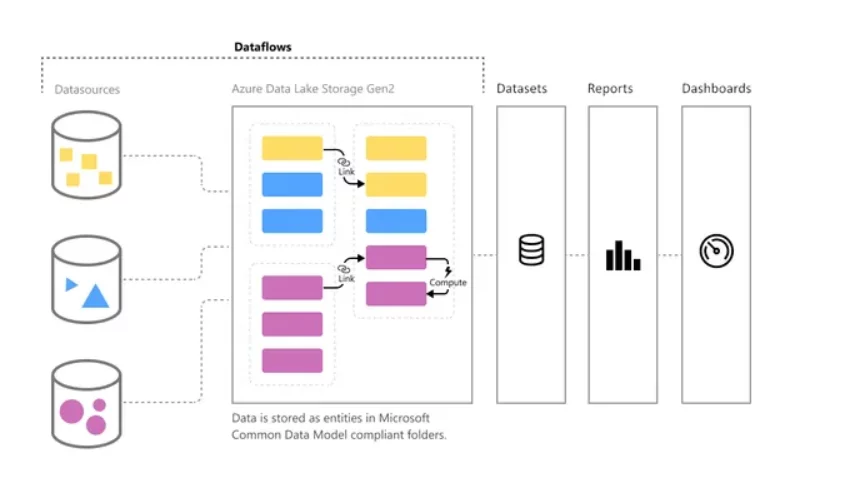

Building an enterprise-grade data integration pipeline is a lengthy process that involves many architectural considerations and guidelines. Businesses often evolve so quickly that the IT team can’t keep up with the demands of the changing business environment. To address this, Microsoft created dataflows, a fully managed data preparation tool for Power BI that both developers and business users can use to connect to data sources and prepare data for reporting and visualization.

What is Dataflow?

Power BI Dataflow

How Does it Work?

Benefits of Dataflows

- Dataflows allow self-service data preparation, making the process more manageable. They require no IT or developer background.

- Dataflows are capable of advanced transformations.

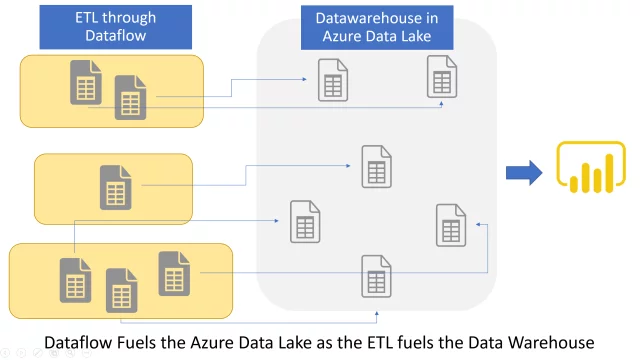

- Dataflows let you create a single version of the truth: a centralized data warehouse that can be used for all reporting solutions.

- In a Power BI solution, a dataflow separates the data transformation layer from the modelling and visualization layer.

- Rather than being split across numerous artifacts, the data transformation code can be stored in a single dataflow.

- A dataflow creator only needs Power Query skills. The dataflow creator can be part of a team that produces the whole BI solution or operational application.

- A dataflow is product agnostic. It’s not just a feature of Power BI; you can access its data from a variety of different tools and services.

- Dataflows run in the cloud and require no infrastructure management.

- Dataflows support incremental refresh (Power BI Premium required).
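To make the incremental refresh benefit concrete, here is a minimal Python sketch of the general idea: rows older than a refresh window are kept as they are, and only rows inside the window are re-read from the source. This is a conceptual illustration only, not how Power BI implements the feature. The function name, the row layout, and the seven-day window are all assumptions made for the example.

```python
from datetime import date, timedelta


def incremental_refresh(stored_rows, source_rows, today, window_days):
    """Reload only rows dated inside the refresh window (hypothetical helper)."""
    window_start = today - timedelta(days=window_days)
    # Rows older than the window are kept without re-reading the source.
    kept = [row for row in stored_rows if row["date"] < window_start]
    # Rows inside the window are re-read from the source.
    reloaded = [row for row in source_rows if row["date"] >= window_start]
    return kept + reloaded, len(reloaded)


today = date(2024, 1, 31)
stored = [
    {"date": date(2024, 1, 1), "amount": 100},
    {"date": date(2024, 1, 30), "amount": 50},
]
source = [
    {"date": date(2024, 1, 1), "amount": 100},
    {"date": date(2024, 1, 30), "amount": 55},  # value corrected at the source
    {"date": date(2024, 1, 31), "amount": 70},  # new row
]

rows, reloaded_count = incremental_refresh(stored, source, today, window_days=7)
print(reloaded_count)                      # 2
print([row["amount"] for row in rows])     # [100, 55, 70]
```

Only two of the three source rows are re-read here. On a large historical table, this is why an incremental refresh is much cheaper than a full reload.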

Use Cases

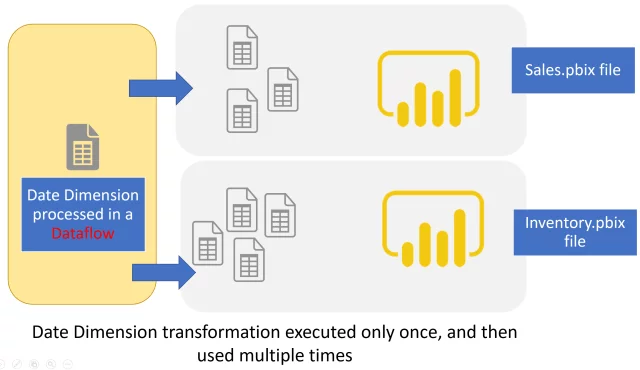

A Single Power Query Table in Multiple Power BI Reports

Whenever you need to use one Power Query table in multiple Power BI reports, a dataflow is the natural choice. You define the transformations once, on a single table, and every report that needs the same logic can reuse the result. Reusing tables or queries across multiple Power BI files is one of the best use cases for dataflows.

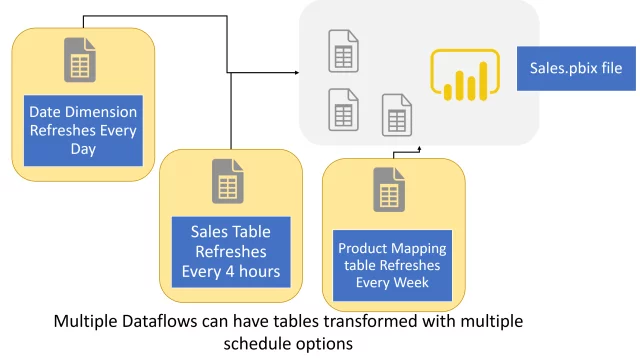

Different Data Sources with Different Refresh Schedules

Suppose you have a dataset whose tables come from different data sources and need different refresh schedules. One table needs to be refreshed once a week and the other once a day. Because the dataset refreshes as a whole, you have to refresh it at the highest required frequency, which is once a day, even though the weekly table doesn’t need daily refreshes.

Each dataflow has its own refresh schedule, so you can split tables across multiple dataflows and refresh each one only as often as it needs. For the example above, you can create two dataflows, one per table, schedule one daily and the other weekly, and then load both prepared tables into the dataset. This makes the solution simpler and more efficient.
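The saving in this example can be worked out with a few lines of Python. This is only a rough sketch of the arithmetic: the table names and the helper function are hypothetical, and it assumes that a single dataset refreshes every table at the highest required frequency, as described above.

```python
def weekly_table_refreshes(required_per_week, combined):
    """Return how often each table is refreshed per week (hypothetical helper)."""
    if combined:
        # One dataset: every table is refreshed at the highest required frequency.
        highest = max(required_per_week.values())
        return {table: highest for table in required_per_week}
    # Separate dataflows: each table follows its own schedule.
    return dict(required_per_week)


# Assumed requirements from the example: one daily table, one weekly table.
required = {"daily_table": 7, "weekly_table": 1}

single_dataset = weekly_table_refreshes(required, combined=True)
separate_dataflows = weekly_table_refreshes(required, combined=False)

print(sum(single_dataset.values()))      # 14
print(sum(separate_dataflows.values()))  # 8
```

With separate dataflows, the weekly table is read from its source once a week instead of seven times, which reduces load on that source without changing what the reports show.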

Build Data Warehouse

Conclusion
